Social Engineering Has Evolved. Has Your SAT?

For most of the last decade, social engineering looked pretty predictable: poorly written phishing emails, fake login pages, and scams that worked because someone clicked something they shouldn’t have.

The defenses we in the tech industry built reflected that reality. We trained users to look for misspellings, suspicious links, strange attachments, and unexpected requests.

That advice used to work, and it’s still a great foundation. The problem is that social engineering doesn’t look like that kind of phishing anymore.

AI has fundamentally changed how attackers operate. The scams are cleaner, faster to create, and far more believable. And the techniques attackers once reserved for high-value targets are now available to anyone with a laptop and a few bucks. So if your security awareness training (SAT) still assumes attackers are sloppy, you’re preparing people for the last generation of threats.

What Social Engineering Actually Is

Social engineering is a simple concept with a fancy name. Attackers exploit human trust, urgency, and authority to get people to do something they normally wouldn’t.

- Click a link.

- Share credentials.

- Approve a payment.

- Send a document.

Technology changes, but the principle stays the same. Humans are the entry point.

Historically, attackers needed some skill to pull this off. Crafting believable phishing emails or impersonation campaigns took time and effort. AI has removed that barrier. Not to sound like an old man, but scammers don’t even need to be good or put in any effort these days!

The Old Playbook

Traditional social engineering attacks usually followed a recognizable pattern. Phishing emails were often easy to spot because they contained obvious signals:

- Poor grammar

- Strange formatting

- Generic greetings

- Suspicious domains

- Odd requests

Security awareness training evolved around those signals. Users were taught to identify the “tells”, which worked when attackers had limited tools.

But today, attackers have access to AI tools that can:

- Generate perfect emails in seconds

- Research targets automatically

- Mimic writing styles

- Clone voices

- Create convincing video and audio deepfakes

The quality gap between legitimate communication and malicious communication has collapsed.

The New Reality: AI-Powered Social Engineering

AI has dramatically lowered the cost and complexity of launching social engineering attacks. Attackers no longer need to be native English speakers to write convincing messages, deep technical expertise to impersonate executives, or hours to research their targets.

AI does that work instantly. And that means we’re seeing attacks that look very different from traditional phishing. Instead of obvious scams, we’re seeing:

Hyper-personalized messages

Attackers can scrape LinkedIn, company websites, and social media to generate highly contextual messages that feel legitimate.

Voice impersonation attacks

Voice cloning technology can now replicate someone’s voice from a short audio sample.

Deepfake video impersonation

Attackers can generate convincing videos of executives requesting urgent action.

Automated phishing at scale

AI allows attackers to run highly customized campaigns across thousands of targets simultaneously.

The barrier to entry has never been lower.

Real-World Examples

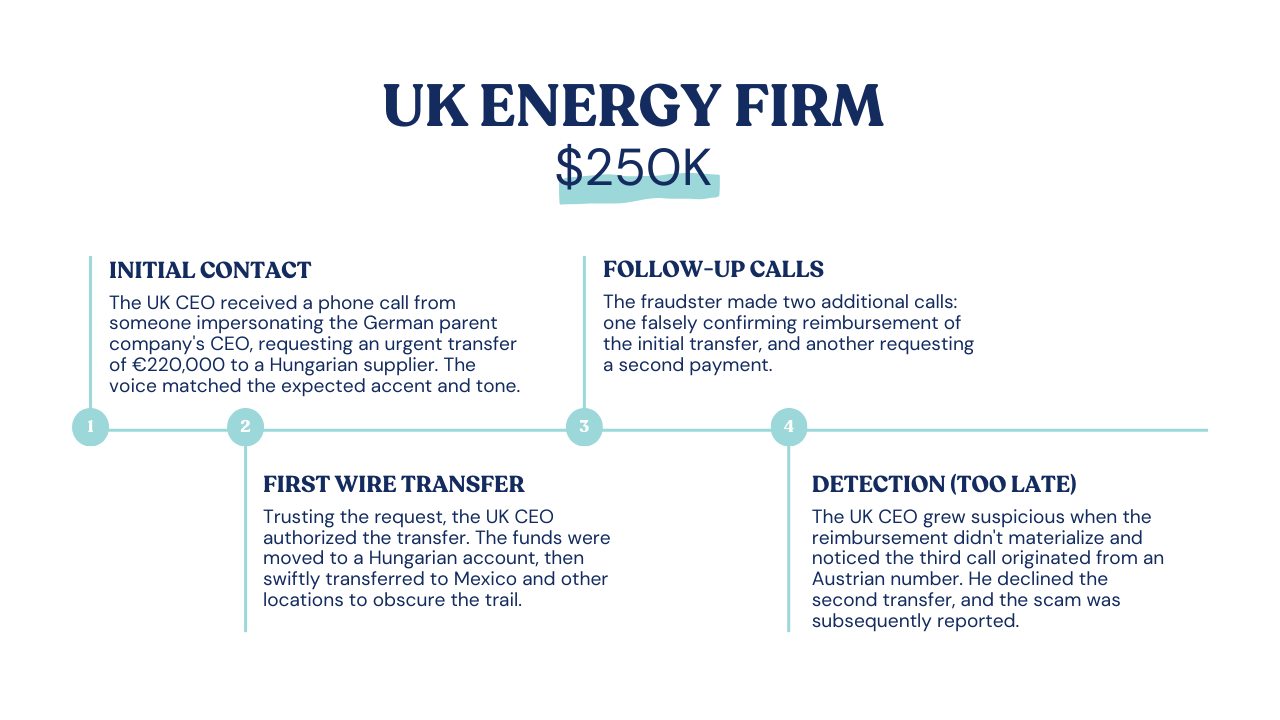

These attacks aren’t theoretical.

Organizations are already dealing with them.

One widely reported case involved attackers using AI-generated voice cloning to impersonate a company executive and convince an employee to transfer millions of dollars. And that was over half a decade ago!

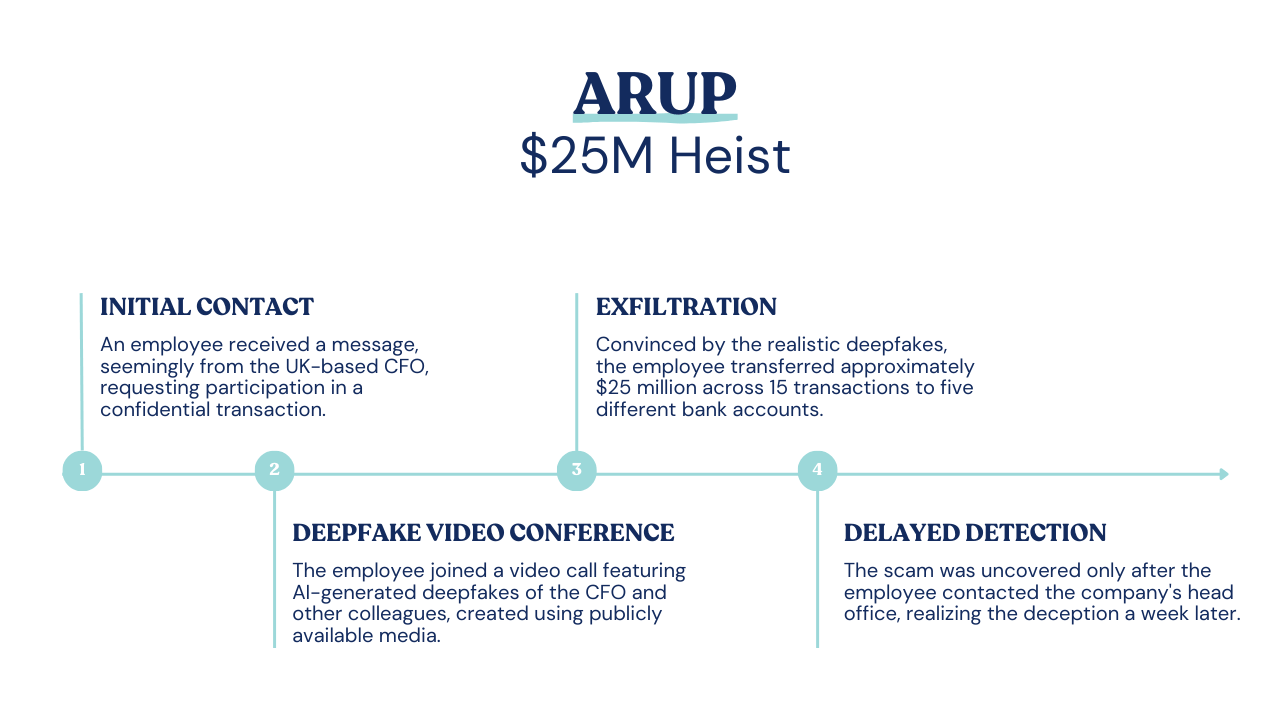

In another, more recent incident, attackers used deepfake video during a video call to impersonate senior leadership, directing employees to approve fraudulent financial transactions.

These attacks worked for a simple reason: the signals employees were trained to look for were gone. There were no spelling mistakes. No strange email formatting. No obvious phishing links. Everything looked legitimate.

Why Traditional Security Awareness Training Falls Short

Most security awareness programs still train users to identify technical clues.

- Hover over links.

- Check the sender address.

- Look for suspicious attachments.

As I said at the top of the article, those are still useful skills. But modern social engineering attacks increasingly happen outside email entirely.

They happen in:

- Voice calls

- Video meetings

- Slack or Teams messages

- SMS conversations

- Legitimate-looking platforms

The challenge is no longer spotting broken phishing emails; it’s recognizing manipulation. And that requires a different approach to training.

What Security Teams Need to Teach Instead

If attackers are exploiting human behavior, training needs to focus on human behavior too.

From a defensive standpoint, there are four practical things organizations should be doing right now.

1. Multi-Channel Verification

Many successful social engineering attacks rely on impersonating authority figures. An employee receives a message from a “CEO” or “CFO” asking for urgent help. AI makes these impersonations more convincing than ever.

That means organizations need to normalize verification across multiple channels.

If someone requests a payment, sensitive data, or account access, verify it somewhere else. Call them back. Send a separate message. Confirm through another system.

Authority alone isn’t enough anymore, even if the request appears to come from leadership. In fact, especially if it appears to come from leadership.

It’s worth pointing out that this only works if leadership buys into it. If the boss has to wait a few minutes while someone verifies a request, that should be celebrated as good security behavior, not treated as an inconvenience.

And it’s good to set expectations with your employees early on. As soon as they join Phin, I give employees my cell phone number and tell them they will never receive a text from me about payments or any sensitive information. So a text from “Connor Swalm,” especially one that doesn’t come from my number, is an immediate red flag.

2. Employee Awareness Training

Hover over links. Look for spelling mistakes. Don’t download suspicious attachments.

Those things still matter, but they’re no longer the whole picture.

Modern social engineering attacks happen across email, messaging platforms, voice calls, and even video meetings. AI allows attackers to impersonate people, mimic writing styles, and create convincing deepfakes.

Training needs to evolve alongside those tactics.

Employees should understand how modern social engineering works, what manipulation looks like, and why urgency or authority are often used as pressure tactics.

The goal isn’t just spotting a bad email anymore. It’s recognizing when someone is trying to manipulate you.

3. Use Codewords for Sensitive Requests

This might sound simple, but it’s surprisingly effective.

Organizations can establish internal codewords or phrases that are required for certain types of requests, especially financial transfers or access to sensitive systems.

If someone claims to be an executive asking for an urgent payment but can’t provide the agreed codeword, that request stops immediately.

It’s a basic concept, but it creates a layer of verification that’s very difficult for attackers to bypass, especially in impersonation scenarios.

4. Detection Tools

Training and processes are critical, but they shouldn’t exist in isolation.

Organizations should also be using detection tools that can identify suspicious activity across email, messaging platforms, and collaboration software.

Modern security platforms can help flag abnormal behavior, suspicious communications, or potential impersonation attempts before they reach employees.

The goal isn’t to rely on technology alone. It’s to combine detection tools with training and verification processes so that multiple layers of defense exist.

Because when it comes to social engineering, attackers only need to succeed once.

Defenders need systems, processes, and people working together.

The Future of Social Engineering

The truth is that social engineering will only get more sophisticated. We haven’t hit the peak yet, and with the way technology develops, it’s unlikely that we ever will.

AI tools are evolving rapidly: deepfake generation is improving, and voice cloning is becoming more accessible. And attackers are creative. But the fundamental weakness they exploit hasn’t changed: people trust other people.

Technology alone can’t solve that problem. What organizations can do is build security cultures where employees feel comfortable questioning unusual requests and verifying sensitive actions.

That comfort should come from a deeper understanding of how attackers operate, not from an inherent distrust of colleagues. Everyone needs to be happy to ask the awkward questions, and to have those questions asked of them.

Social engineering has evolved. Most training programs haven’t.

If you're still just preparing users to detect obvious phishing emails, you’re training them for a threat that makes up a teeny tiny part of the modern landscape.

Today, it’s not enough to spot a dodgy email from a Nigerian prince looking to hide millions of dollars in your account. Every user now needs to be on the lookout for signs of manipulation. A minor in psych would probably do the trick, but in the real world, a robust and up-to-date SAT program is your best bet.

How are you prepping your users for the new world of social engineering?
